The recap for this Dreamforce is going to come across as a bit of a photo journal as I go back through my phone's timeline to piece together what I got up to. Somehow, even on my fifth time attending this conference, things still went past at a frantic pace.

Table of Contents

- Preconference

- Day One

- My Presentation

- Main Keynote

- Day Two

- The Lightning Platform Roadmap

- Dreamfest

- Day Three

- Developer Keynote

- Meet the Developers

- True to the Core

- Day Four

- MuleSoft

- Deployment Fish

Preconference

A visit to the Salesforce Tower for the Tooling Partner meeting. Then a quick visit to the Salesforce Park (the day before it was closed due to structural damage).

Day One

Testing Lightning Flow Automations

- Usage of Test.loadData() to prep records for testing from a static resource.

- Starting auto launched flows from a testing context.

- In Winter '19 - Use FlowTestCoverage and FlowElementTestCoverage from the Tooling API to get coverage details for flows. See Track Process and Flow Test Coverage
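As a rough sketch of how those coverage details could be read, the Python below builds a Tooling API query URL for FlowTestCoverage and turns each returned row into a percentage. The instance URL, the API version (v44.0 for Winter '19), the helper names, and the sample row are assumptions for illustration. The field names are taken from the Tooling API object as generally documented and should be checked against the current reference.

```python
from urllib.parse import quote

# Assumed API version for Winter '19; adjust for your org.
API_VERSION = "v44.0"


def flow_coverage_query_url(instance_url):
    """Build a Tooling API query URL for FlowTestCoverage rows (hypothetical helper)."""
    soql = (
        "SELECT FlowVersionId, TestMethodName, "
        "NumElementsCovered, NumElementsNotCovered "
        "FROM FlowTestCoverage"
    )
    return (
        f"{instance_url}/services/data/{API_VERSION}"
        f"/tooling/query/?q={quote(soql)}"
    )


def row_coverage_percent(row):
    """Percentage of flow elements one test method covered for one flow version."""
    covered = row["NumElementsCovered"]
    total = covered + row["NumElementsNotCovered"]
    # A flow version with no countable elements is reported as 0% here.
    return 100.0 * covered / total if total else 0.0


if __name__ == "__main__":
    # Sample row with made-up values, shaped like a query result record.
    sample = {
        "FlowVersionId": "301000000000001",
        "TestMethodName": "testMyFlow",
        "NumElementsCovered": 3,
        "NumElementsNotCovered": 1,
    }
    print(flow_coverage_query_url("https://example.my.salesforce.com"))
    print(row_coverage_percent(sample))
```

Each row is one test method's coverage of one flow version, so this does not combine coverage across tests. That would need the per-element detail in FlowElementTestCoverage.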

DX Super Session (Video)

New scratch org snapshots. In pre-release it is using the 0TT key prefix. Which is a Trailforce Template. #DF18 pic.twitter.com/d7EqiEO3yA

— Daniel Ballinger 🦈 (@FishOfPrey) September 25, 2018

New scratch org "snapshots" can be used as the starting point for creating additional scratch orgs. The snapshot can pre-configure the scratch org in a known state, e.g. with the dependent packages installed and test data loaded. They are currently using the 0TT Trailforce Template key prefix. Note that any orgPreferences are additive over what is already present in the snapshot.

The FORCE_SHOW_SPINNER and FORCE_SPINNER_DELAY environment options can make automating the CLI easier. They prevent the spinner coming back on the standard output.
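When scripting the CLI, one way to apply these options is to set them on the child process environment rather than globally. This is a minimal sketch: the helper names are hypothetical, and the value "false" for FORCE_SHOW_SPINNER is an assumption, since the session notes above do not record which values to use.

```python
import os
import subprocess


def cli_env(show_spinner="false", spinner_delay=None, base=None):
    """Return a copy of the environment with the spinner options applied.

    The default value "false" is an assumption; check the CLI docs.
    """
    env = dict(os.environ if base is None else base)
    env["FORCE_SHOW_SPINNER"] = show_spinner
    if spinner_delay is not None:
        # Environment variable values must be strings.
        env["FORCE_SPINNER_DELAY"] = str(spinner_delay)
    return env


def run_cli(args, **env_options):
    """Run a CLI command with the spinner options set and return its stdout."""
    result = subprocess.run(
        args,
        env=cli_env(**env_options),
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout
```

A script could then call something like `run_cli(["sfdx", "force:org:list", "--json"])` and parse the returned text without spinner characters mixed in.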

Naming for unlocked package versions.

- Source for the demo - https://github.com/afawcett/df18-dx-demo

My Presentation

I've done a whole separate blog post to cover my talk - Dreamforce 2018 Presentation - Understand your Org shape via visualization of Metadata Component Dependencies.

Main Keynote

It’s Keynote time! #DF18 #MVP Thanks @hollygfirestone @lexpisani & team for herding us into our seats 😍 pic.twitter.com/Onh6W3bDIF

— Kristi Campbell Guzman (@KristiForce) September 25, 2018

Demos of Einstein Voice converting speech to text, and then to actions within Salesforce.

Einstein Voice is FREE!!!! Control Salesforce using your voice!!

— David K. Liu (@dvdkliu) September 25, 2018

Customer 360 - Create a canonical view of the customer across Marketing Cloud, Commerce Cloud, and Service Cloud.

Oh. I need one of those for a current project. Where can I get a #df18 Customer 360 balloon. pic.twitter.com/B56R3GCu9r

— Daniel Ballinger 🦈 (@FishOfPrey) September 25, 2018

MVP Party

This year it was at the Tonga Room & Hurricane Bar. The band on the boat was 👌.

Day Two

The Lightning Platform Roadmap - Part I and Part II

Changes coming to Apex from the roadmap #DF18 session. Yay for FLS/CRUD enforcement getting better native support. pic.twitter.com/Eyp6VVwXqH

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

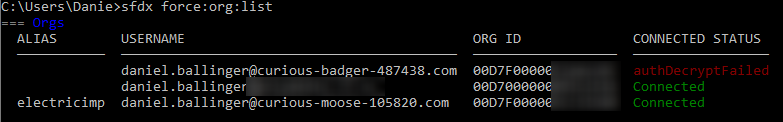

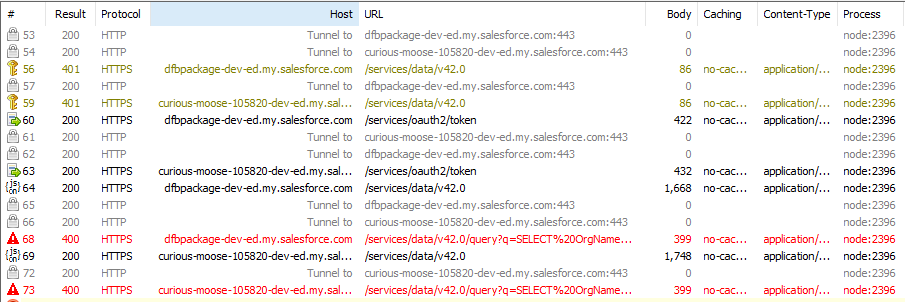

Support for non scratch org dev from the CLI. Plus pre and post command hooks. #DF18 pic.twitter.com/TflpFCzmKU

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

Apex extract method and other refactoring support. Preview of an entirely web based IDE. #DF18 pic.twitter.com/4rgQrDOVMF

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

Sandbox cloning. Targeting both a sandbox and scratch of to a specific release version. #DF18 pic.twitter.com/B35dMvA12e

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

The ability to create a snapshot of a Scratch org to use as a template for creating new scratch org. Useful to get all the pre setup and data load done.

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

2nd generation packages for managed packages, along with a migration path. #DF18 pic.twitter.com/fnVUNFzOFK

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

Integration roadmap via @extraidea at #DF18 pic.twitter.com/K3cCTDi4iq

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

Day Three

Developer Keynote

Lightning Developer Pro Sandboxes - Spin up faster than a traditional Sandbox. Approx 5 minutes to activate (versus a few hours to even days).

Another example of a named package version.

Using @MuleSoft anypoint to aggregate multiple APIs into a single endpoint at the #DF18 dev keynote. pic.twitter.com/ems78qtGXS

— Daniel Ballinger 🦈 (@FishOfPrey) September 27, 2018

Spring 19 roadmap from the @SalesforceDevs #DF18 keynote pic.twitter.com/KVGoIP8nqN

— Daniel Ballinger 🦈 (@FishOfPrey) September 27, 2018

True to the Core

Lots of coverage of how Salesforce will work towards reinvigorating the IdeaExchange to handle the current scale. In particular, limiting each user's ability to vote on every possible idea, forcing them to focus on what is most important to them. Salesforce will then commit to working on the highest rated ideas.

So everyone who saw True to the Core at @Dreamforce today, what do you think of our IdeaExchange plan? Send me feedback! CC @parkerharris @jennyst0 @mike945778

— Bret Taylor (@btaylor) September 27, 2018

Everything that's Awesome with Apex

(Slides)

Roadmap for Spring '19 and Summer '19

- Exposing the Org Limit details in Apex. E.g. Daily Async Apex executions.

- Support for creating Scratch Orgs with access to Platform Cache.

- Support for "rename symbol" refactoring in Apex via the Apex Language Services, and by extension Visual Studio Code. Longer term, this may expand to implementing interfaces and extracting variables.

- Improved support for enforcing Field Level Security (FLS) and Create, Read, Update, Delete (CRUD) in Apex. The goal is to reduce the amount of processing that was historically required to do this. It's particularly important for those publishing on the AppExchange. https://twitter.com/FishOfPrey/status/1044978778684841984

Quip Party

The August Hall was a great venue, including a bowling alley and mini arcade downstairs.

Some bowling with @shunkosa and @jan_vdv at the @Quip party before Macklemore comes on. pic.twitter.com/dAyWjbutjj

— Daniel Ballinger 🦈 (@FishOfPrey) September 28, 2018

Macklemore with special guest @SteveMoForce pic.twitter.com/z7P5SibBCZ

— Daniel Ballinger 🦈 (@FishOfPrey) September 28, 2018

Day Four

MuleSoft

Taking a RAML definition in @MuleSoft anypoint and creating a mock web service. Outputs an endpoint URL to call. Can also convert definition to the Open API format. #DF18 pic.twitter.com/XPzo7uuPLT

— Daniel Ballinger 🦈 (@FishOfPrey) September 28, 2018

Deployment Fish Bottle Openers

I said I'd create a bespoke @deploymentfish bottle opener if @SalesforceDevs delivered the switch statement for Apex last year at #DF17. They delivered, so I did too at #DF18. Thanks @slettehaugh, @ca_peterson, and team. https://t.co/OdFH505GMZ https://t.co/9ulWwlKL2M

— Daniel Ballinger 🦈 (@FishOfPrey) September 26, 2018

Highlights of @Dreamforce day 1 included meeting @FishOfPrey and getting my own deployment fish. What were your top #DF18 moments? pic.twitter.com/CdYh1DbxZJ

— Carl Brundage (@carlbrundage) September 26, 2018

TIL that @deploymentfish are UV sensitive. pic.twitter.com/0HZQYjHW3m

— Daniel Ballinger 🦈 (@FishOfPrey) September 27, 2018

Forgot this in my shirt pocket. TSA initially thought it was some kind of blade or razor. Another reason I will always treasure my #DeploymentFish. Thanks, @FishOfPrey! pic.twitter.com/INHcjkU3za

— peter chittum (@pchittum) September 29, 2018

To catch up on

- Be An Efficient Salesforce Developer with VS Code (Video)

- Change Data Capture